The scheduled job runs as follows (notice the extra escape character before \n and inside ENCLOSED BY):

`… * * * * mysql -u User -pPassword -hClusterDomainName -e "use myDatabase; truncate myDatabase.engine_triggers; LOAD DATA FROM S3 's3://bucket/file…`

LOAD DATA FROM S3 examples (ignoring the header line or using quoted fields):

`LOAD DATA FROM S3 's3://bucket/sample_triggers.csv' INTO TABLE engine_triggers FIELDS TERMINATED BY ',' LINES TERMINATED BY '\n' IGNORE 1 LINES;`

`LOAD DATA FROM S3 's3://bucket/sample_triggers.csv' INTO TABLE engine_triggers FIELDS TERMINATED BY ',' ENCLOSED BY '"' ESCAPED BY '"';`

Then I right-clicked on the table store and chose Import/Export Data. The AWS database is connected successfully.
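The LOAD DATA FROM S3 variants above differ only in their optional clauses, so a small helper can assemble them. This is a minimal sketch, not part of the original post: the function name `load_from_s3_sql` and its parameters are hypothetical, and it assumes a comma-separated file and a trusted table name.

```python
def load_from_s3_sql(s3_uri, table, enclosed_by=None, escaped_by=None, ignore_lines=0):
    """Build a LOAD DATA FROM S3 statement for a comma-separated file.

    Hypothetical helper: `table` is inserted as-is, so it must come from
    trusted input, never from user-supplied text.
    """
    def quote(value):
        # Escape backslashes and single quotes for a MySQL string literal.
        return "'" + value.replace("\\", "\\\\").replace("'", "\\'") + "'"

    parts = [
        f"LOAD DATA FROM S3 {quote(s3_uri)}",
        f"INTO TABLE {table}",
        "FIELDS TERMINATED BY ','",
    ]
    if enclosed_by is not None:
        parts.append(f"ENCLOSED BY {quote(enclosed_by)}")
    if escaped_by is not None:
        parts.append(f"ESCAPED BY {quote(escaped_by)}")
    # Raw string: the statement carries the two characters \ and n.
    parts.append(r"LINES TERMINATED BY '\n'")
    if ignore_lines:
        parts.append(f"IGNORE {ignore_lines} LINES")
    return " ".join(parts) + ";"


# Header-skipping variant and quoted-fields variant.
skip_header = load_from_s3_sql(
    "s3://bucket/sample_triggers.csv", "engine_triggers", ignore_lines=1
)
quoted_fields = load_from_s3_sql(
    "s3://bucket/sample_triggers.csv", "engine_triggers",
    enclosed_by='"', escaped_by='"',
)
print(skip_header)
print(quoted_fields)
```

When the resulting statement is passed to `mysql -e "..."` from cron, the double quote and the backslash still need the extra shell-level escaping mentioned above.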

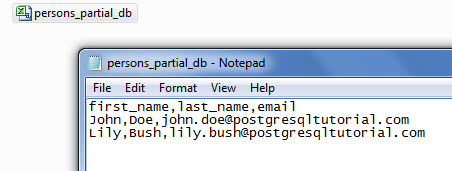

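Use the following command to copy the files into your RDS: `psql -h … -U …`.

To export a PostgreSQL table to a CSV file, check the data with `SELECT * FROM persons;` and then run `COPY persons TO 'C:\tmp\personsdb.csv' DELIMITER ',' CSV HEADER;`. To export only some columns, list them after the table name: `COPY persons(firstname…`.

I am following the instructions in this article to import a CSV using pgAdmin.

To fetch the file from S3 first: `aws s3 cp s3://bucket/file.csv /mydirectory/file.csv` (`aws s3 sync` works on prefixes and directories, not on a single file).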
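The psql command above is missing its arguments, so here is one possible way to fill it in for a client-side CSV import using psql's `\copy` meta-command. This is a sketch under assumptions: the host, user, database and file names are placeholders, and the helper `psql_copy_command` is hypothetical rather than something from the article being followed.

```python
import shlex


def psql_copy_command(host, user, database, table, csv_path, header=True):
    """Return the argv for a psql call that imports a local CSV with \\copy.

    \\copy reads the file on the client machine, so it works against a
    remote RDS instance without server-side file access.
    """
    header_flag = "true" if header else "false"
    copy = f"\\copy {table} FROM '{csv_path}' WITH (FORMAT csv, HEADER {header_flag})"
    return ["psql", "-h", host, "-U", user, "-d", database, "-c", copy]


# Placeholder connection details (assumptions, not from the source).
argv = psql_copy_command(
    host="my-instance.example.rds.amazonaws.com",
    user="postgres",
    database="postgres",
    table="public.store",
    csv_path="store.csv",
)
# Print a shell-safe version of the command for copy and paste.
print(shlex.join(argv))
```

Building the argument list first and quoting it with `shlex.join` avoids hand-escaping the quotes inside the `\copy` clause.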

Grant the database user permission to load from S3: `GRANT LOAD FROM S3 ON *.* TO 'user'@'domain-or-ip-address';`

I created a table called 'store' under schema 'public'. Now I want to import a CSV from my local machine into the AWS Postgres database.

Set your cluster to use the role (with access to S3).

Assuming your DB is on a private LAN, set up a VPC endpoint to S3 (it is always good practice to set up an endpoint anyway).

Add the ARN of the role with S3 access to the Aurora parameter group, under aurora_load_from_s3_role, and reboot the Aurora DB.
